Organizations handling massive datasets face an escalating threat landscape. Cyberattacks targeting big data infrastructure have grown more sophisticated, with breaches exposing millions of records annually.

At Scan N More, we’ve seen firsthand how inadequate security frameworks leave companies vulnerable. This guide walks through proven protection strategies that actually work.

What Actually Threatens Your Big Data

The Attack Vector That Works

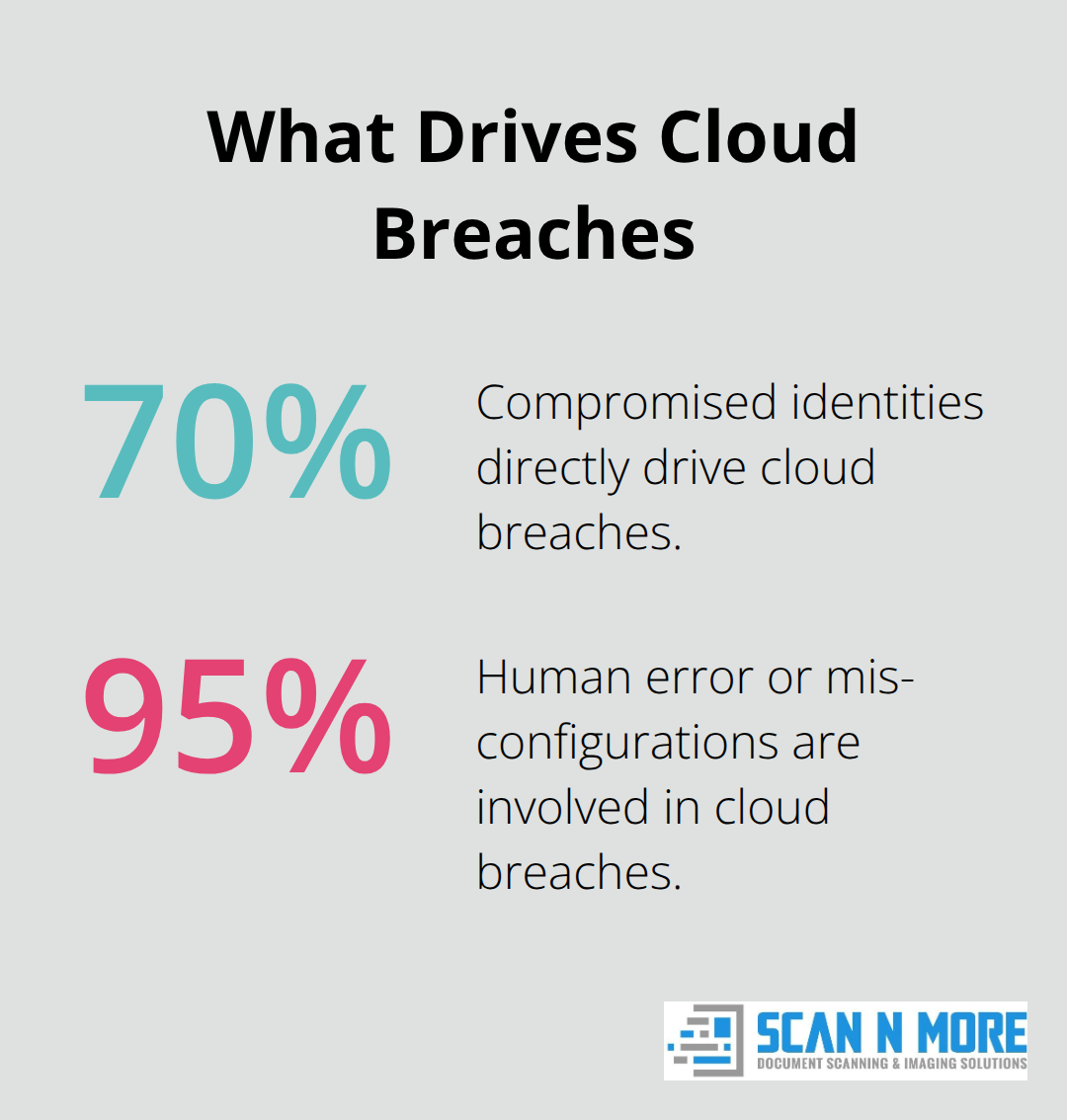

Data breaches involving large datasets have become routine. In 2025 alone, organizations experienced roughly 1,968 cyberattacks per week, with human factors driving 74 to 95 percent of those incidents. Stolen credentials remain the dominant attack vector according to industry data. Attackers exploit this relentlessly because it works: compromised identities give them immediate access to massive repositories of customer records, transaction histories, and proprietary information.

Cloud environments amplify this risk significantly. About 70 percent of cloud breaches stem directly from compromised identities, and as many as 95 percent involve human error or misconfiguration (the two categories overlap). Organizations storing petabytes of data across distributed systems face a hard reality: secure every access point or accept the likelihood of breach. Most choose poorly.

The vulnerability lies not in the technology itself but in how teams deploy and manage it.

Unencrypted data in transit, weak authentication protocols, and delayed patch management create exploitable gaps that persist for months. During that window, attackers exfiltrate massive volumes of information; organizations can shorten this dwell time by actively hunting for threats and monitoring for suspicious behavior. The cost of this negligence compounds quickly: stolen personally identifiable information commands about $200 per record on dark markets, so a dataset of one million records represents a $200 million liability.
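The arithmetic behind that liability figure is simple enough to sketch. The function below is illustrative only: `estimated_exposure` is a hypothetical name, and the default price is the roughly $200 per record quoted above, not a fixed market rate.

```python
def estimated_exposure(record_count: int, price_per_record: float = 200.0) -> float:
    """Rough dollar exposure if every record in a dataset were stolen.

    price_per_record defaults to the approximate $200 figure cited in the text;
    treat it as an assumption and substitute your own estimate.
    """
    if record_count < 0:
        raise ValueError("record_count must be non-negative")
    return record_count * price_per_record


print(f"${estimated_exposure(1_000_000):,.0f}")  # prints $200,000,000
```

Running the same calculation against your own record counts gives a quick, if crude, way to rank which datasets deserve protection first.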

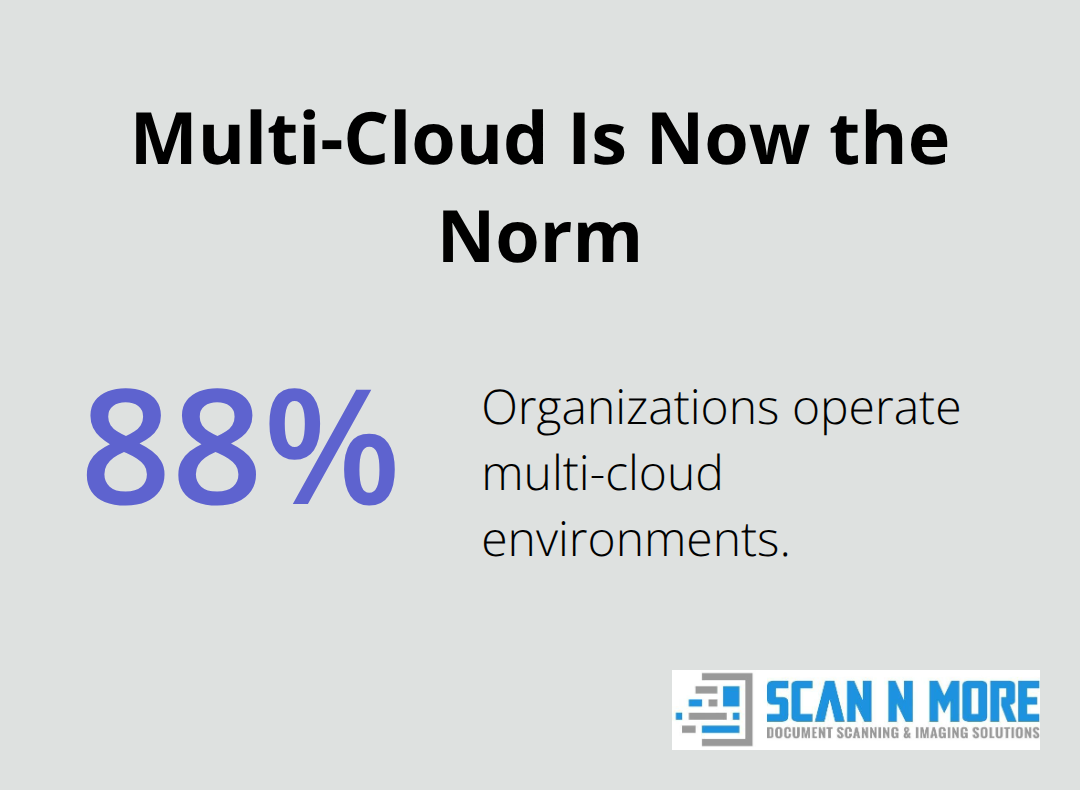

Fragmentation Across Multiple Clouds

Data storage and processing systems introduce complexity that most organizations underestimate. Multi-cloud and hybrid cloud environments are now standard, with 88 percent of organizations operating across multiple cloud providers and 29 percent using three or more simultaneously. This fragmentation creates visibility gaps and inconsistent security controls across platforms.

Encryption must span relational databases, NoSQL clusters, and Hadoop-like systems, yet many teams apply encryption inconsistently or skip it entirely for data deemed low-risk. Stored data requires encryption at rest plus strong authentication and intrusion detection across distributed server clusters, but the sheer scale makes routine audits impractical. Output data, meaning the results delivered to applications and reports, often lacks equivalent protection, exposing sensitive information through analytics dashboards and exported files.

The Insider Risk Problem

Insider and administrative access present another critical failure point. Teams rarely monitor privileged user activity continuously, allowing bad actors and negligent employees to extract data without triggering alerts. Newer technologies like NoSQL databases and unstructured analytics introduce security gaps that legacy tools cannot address. Organizations that deploy these systems without updated security controls essentially guarantee compromise.

The IMF projects global cybersecurity spending will reach approximately $240 billion in 2026, yet breaches continue accelerating because spending concentrates on detection rather than prevention. Identity-centric security and zero-trust architecture stop attacks before they begin, whereas detection-only approaches guarantee that breach costs multiply across incident response, regulatory fines, and reputation damage. The next section examines the protection strategies that actually prevent unauthorized access from happening in the first place.

How to Actually Protect Big Data at Every Stage

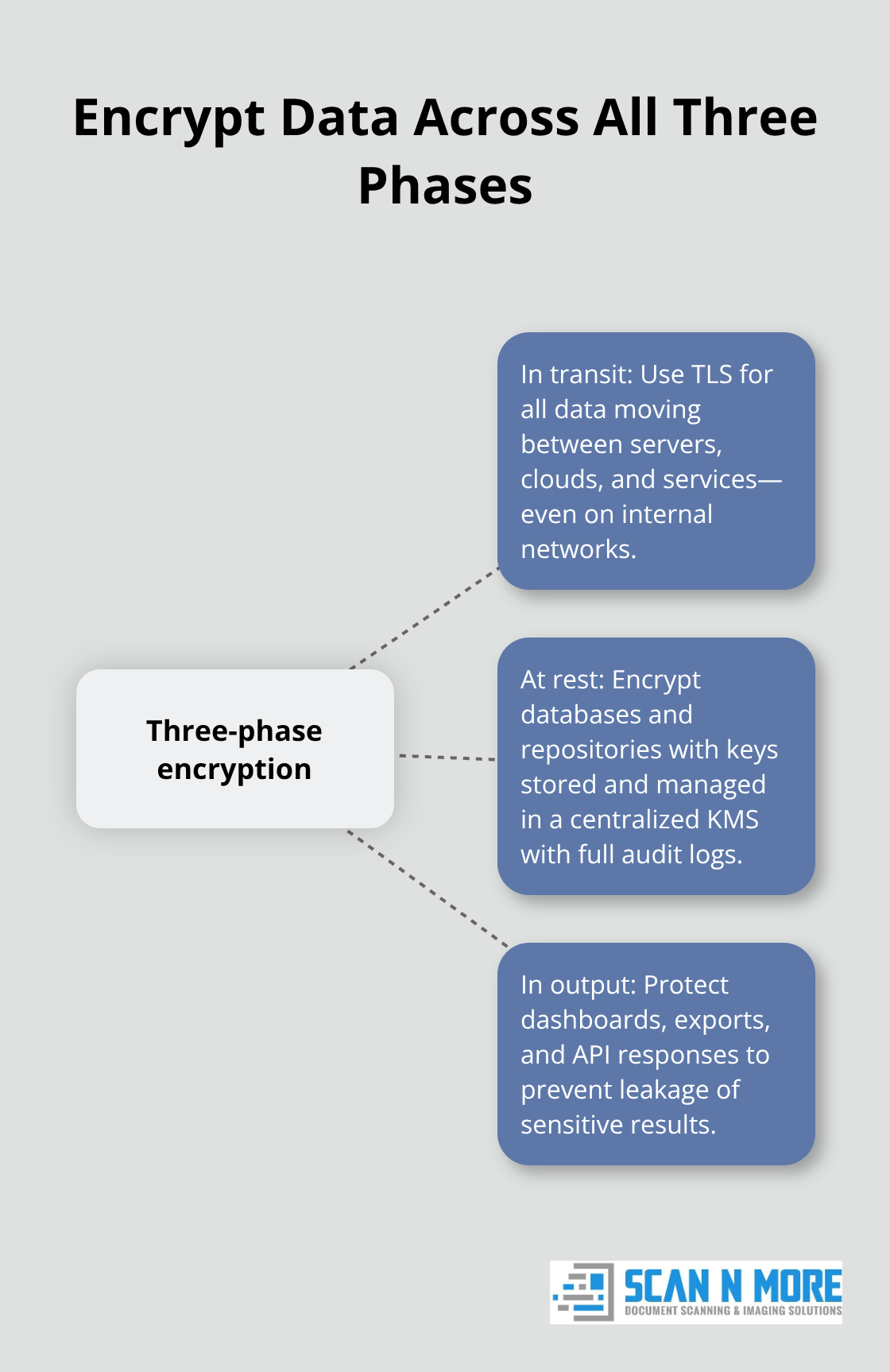

Encrypt Data Across All Three Phases

Encryption forms the foundation of data protection, but implementation determines whether it works or fails. Organizations must encrypt data during transit between systems, while stored in databases and cloud repositories, and when output to applications or reports. Teams that encrypt only one phase create exploitable gaps. Transit encryption protects data moving between servers and cloud providers, yet many organizations skip this step for internal network traffic, assuming their firewall provides sufficient protection. It does not.

Stored data encryption requires keys managed separately from the data itself, using centralized key management systems that enforce policy-driven access and maintain detailed logs of every decryption event. Output encryption prevents sensitive information from leaking through analytics dashboards, exported files, or API responses. This three-stage approach sounds straightforward until organizations deploy it across relational databases, NoSQL clusters, and Hadoop-like systems simultaneously. The complexity multiplies when teams operate in multi-cloud environments where encryption standards differ between providers.

Start by conducting a data inventory that maps where sensitive information exists, how it flows through your systems, and which encryption methods currently protect it. Most organizations discover that 40 to 60 percent of their data lacks encryption at rest, creating immediate vulnerability.
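A data inventory can start as a simple structured list. The sketch below is a minimal illustration, not a full discovery tool: the `DataStore` fields, the store names, and the helper functions are all hypothetical, and real inventories usually come from scanning tools rather than hand-written entries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataStore:
    """One entry in a hypothetical data inventory."""
    name: str
    platform: str               # e.g. "relational", "nosql", "hadoop"
    holds_sensitive_data: bool
    encrypted_in_transit: bool
    encrypted_at_rest: bool


def at_rest_gaps(inventory: list[DataStore]) -> list[str]:
    """Names of sensitive stores that are not encrypted at rest."""
    return [
        store.name
        for store in inventory
        if store.holds_sensitive_data and not store.encrypted_at_rest
    ]


def at_rest_coverage(inventory: list[DataStore]) -> float:
    """Fraction of sensitive stores encrypted at rest (1.0 if none are sensitive)."""
    sensitive = [s for s in inventory if s.holds_sensitive_data]
    if not sensitive:
        return 1.0
    encrypted = sum(1 for s in sensitive if s.encrypted_at_rest)
    return encrypted / len(sensitive)


# Example entries are invented for illustration.
inventory = [
    DataStore("orders_db", "relational", True, True, True),
    DataStore("clickstream", "nosql", True, True, False),
    DataStore("analytics_lake", "hadoop", True, False, False),
    DataStore("public_docs", "relational", False, True, False),
]

print(at_rest_gaps(inventory))               # ['clickstream', 'analytics_lake']
print(f"{at_rest_coverage(inventory):.0%}")  # 33%
```

The same structure extends naturally to transit and output encryption, so one inventory can report gaps in all three phases.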

Implement Strong Authentication and Access Controls

Access control determines who can view, extract, or modify data, making it the second critical defense layer. Stolen credentials remain the dominant attack vector because weak authentication allows attackers to masquerade as legitimate users. Organizations must implement multi-factor authentication as a baseline requirement, not an optional enhancement.

About 92 percent of security leaders plan to implement or are already implementing passwordless authentication methods that eliminate credential-based compromise entirely. These approaches use biometrics, hardware keys, or device-based verification instead of passwords, though careful risk management around on-device storage and liveness checks remains essential. Zero-trust architecture treats every access request as potentially hostile, requiring continuous verification of user identity, device health, and behavioral patterns regardless of network location. This approach stops the 70 percent of cloud breaches driven by compromised identities because attackers cannot exploit stolen credentials when every action requires real-time verification.
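To make the zero-trust idea concrete, here is a minimal sketch of a per-request decision. Everything in it is an assumption for illustration: the `AccessRequest` fields, the risk thresholds, and the three outcomes (`allow`, `step_up`, `deny`) are hypothetical, and the behavioral risk score is presumed to come from a separate model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user_id: str
    mfa_verified: bool       # identity check passed for this session
    device_compliant: bool   # device health check passed
    risk_score: float        # 0.0 (normal) to 1.0 (highly unusual); assumed input
    resource_tier: str       # "public", "internal", "confidential", "restricted"


# Hypothetical thresholds: more sensitive data tolerates less behavioral risk.
MAX_RISK = {"public": 1.0, "internal": 0.7, "confidential": 0.4, "restricted": 0.2}


def decide(request: AccessRequest) -> str:
    """Evaluate one request; network location is deliberately not an input."""
    if request.resource_tier not in MAX_RISK:
        return "deny"        # unknown classification: fail closed
    if not (request.mfa_verified and request.device_compliant):
        return "deny"
    if request.risk_score > MAX_RISK[request.resource_tier]:
        return "step_up"     # ask for re-verification instead of trusting the session
    return "allow"


print(decide(AccessRequest("alice", True, True, 0.1, "restricted")))  # allow
print(decide(AccessRequest("alice", True, True, 0.5, "restricted")))  # step_up
print(decide(AccessRequest("alice", False, True, 0.0, "internal")))   # deny
```

The design point is that the function runs on every request, so a stolen credential alone is not enough: the device and behavior checks must also pass each time.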

Monitor Privileged Users and Detect Threats

Privileged access management specifically monitors administrative users who maintain disproportionate control over data systems. Continuous activity logging for these accounts surfaces unusual extraction patterns, bulk downloads, or access to sensitive datasets outside normal working hours. Organizations that implement these controls reduce their breach likelihood substantially compared to teams relying on perimeter security alone.
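The checks described above can be sketched as simple rules over an activity log. This is a minimal illustration under stated assumptions: the `AdminEvent` fields, the 100,000-row bulk threshold, and the 08:00 to 18:00 weekday working window are all hypothetical values you would tune to your own environment.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AdminEvent:
    account: str
    timestamp: datetime
    dataset: str
    rows_read: int
    dataset_sensitive: bool


BULK_ROWS = 100_000           # assumed threshold for a "bulk download"
WORK_START, WORK_END = 8, 18  # assumed working hours, local time


def flag_reasons(event: AdminEvent) -> list[str]:
    """Return the reasons, if any, that a privileged event deserves review."""
    reasons = []
    if event.rows_read >= BULK_ROWS:
        reasons.append("bulk_download")
    weekend = event.timestamp.weekday() >= 5
    off_hours = weekend or not (WORK_START <= event.timestamp.hour < WORK_END)
    if event.dataset_sensitive and off_hours:
        reasons.append("sensitive_access_off_hours")
    return reasons


night_export = AdminEvent("dba_01", datetime(2025, 3, 4, 2, 30), "customers", 750_000, True)
routine_read = AdminEvent("dba_01", datetime(2025, 3, 4, 10, 0), "customers", 200, True)

print(flag_reasons(night_export))  # ['bulk_download', 'sensitive_access_off_hours']
print(flag_reasons(routine_read))  # []
```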

The final defense involves threat detection systems that identify attacks in progress before exfiltration occurs. Cloud breach dwell time averages about 277 days according to industry data, meaning organizations have months to detect and stop attackers if they deploy continuous monitoring. Automated threat detection systems analyze millions of signals per second to identify anomalies earlier, reducing response times from weeks to hours. These systems function most effectively when they consolidate data from multiple sources (cloud access logs, database activity monitors, and network traffic analysis) into a single platform, eliminating blind spots created by fragmented monitoring tools.

Organizations that combine encryption, strong authentication, and continuous threat detection create layered defenses that attackers struggle to penetrate. Selecting the right data security products ensures these protection strategies integrate seamlessly into your infrastructure.

Building Your Security Framework in Practice

Classify Data and Assign Ownership

Organizations that enforce data governance policies see measurable improvements in breach prevention, yet most teams treat governance as a compliance checkbox rather than a working system. Governance means defining who accesses what data, under what circumstances, and with what audit trail. Start by classifying your datasets into sensitivity tiers: public, internal, confidential, and restricted. Each tier receives different encryption standards, access requirements, and monitoring intensity. A financial transaction database demands stricter controls than an internal employee directory, so your governance framework must reflect that distinction.
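One way to keep tiered governance enforceable is to express it as data that tooling can read. The sketch below assumes the four tiers named above; the specific controls and values in `POLICY` are hypothetical examples, not recommended settings.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Controls:
    encrypt_at_rest: bool
    require_mfa: bool
    log_every_read: bool
    access_review_days: int  # how often access grants are re-approved


# Illustrative values only; each organization sets its own.
POLICY = {
    Tier.PUBLIC: Controls(False, False, False, 365),
    Tier.INTERNAL: Controls(True, True, False, 180),
    Tier.CONFIDENTIAL: Controls(True, True, True, 90),
    Tier.RESTRICTED: Controls(True, True, True, 30),
}

# Hypothetical dataset classifications matching the example in the text.
DATASET_TIERS = {
    "financial_transactions": Tier.RESTRICTED,
    "employee_directory": Tier.INTERNAL,
}


def controls_for(dataset: str) -> Controls:
    """Look up required controls; unclassified data gets the strictest tier."""
    tier = DATASET_TIERS.get(dataset, Tier.RESTRICTED)
    return POLICY[tier]


print(controls_for("financial_transactions").access_review_days)  # 30
print(controls_for("employee_directory").log_every_read)          # False
```

Defaulting unclassified datasets to the strictest tier is a deliberate choice here: it makes a missing classification visible as friction rather than as a silent gap.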

Assign data stewards who own each classification and make decisions about access requests rather than defaulting to broad permissions. Document these policies in writing and enforce them through technical controls, not just guidelines that people ignore. Organizations operating across multiple cloud providers must establish consistent governance rules across all platforms, which requires mapping your data landscape first.

Map Your Data Landscape

Identify where sensitive information flows, which systems process it, and which teams touch it. Most organizations cannot answer these questions without conducting a full data inventory, which typically takes 4 to 8 weeks depending on infrastructure complexity. This inventory becomes your governance foundation. Without knowing what data you hold and where it lives, your protection strategies fail because you cannot apply consistent controls.

The inventory process surfaces critical gaps in your current setup. You discover which databases lack encryption at rest, which cloud repositories operate without access logging, and which teams hold excessive permissions. This visibility transforms governance from theoretical policy into actionable security improvements.

Deploy Continuous Analytics for Threat Detection

Advanced analytics platforms transform raw security logs into actionable threat intelligence that prevents breaches before they occur. Deploy continuous analytics across database activity monitors, cloud access logs, and network traffic to detect extraction patterns that precede data theft. Unusual bulk downloads, access to sensitive datasets outside business hours, or queries that retrieve millions of records trigger automated alerts that your security team investigates immediately.
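A very small version of this kind of detection compares each account's current activity with its own history. The sketch below uses a plain z-score over rows retrieved per day; the function name, the threshold of 3.0, and the minimum sample size are assumptions for illustration, and production analytics platforms use far richer models.

```python
from statistics import mean, pstdev


def is_anomalous(
    history: list[int],
    current: int,
    z_threshold: float = 3.0,
    min_samples: int = 5,
) -> bool:
    """Flag `current` if it sits far above the account's own historical baseline."""
    if len(history) < min_samples:
        return False              # not enough data to judge; handle separately
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return current > baseline  # perfectly flat history: any increase stands out
    return (current - baseline) / spread > z_threshold


daily_rows = [1000, 1200, 900, 1100, 1000]  # invented history for one account

print(is_anomalous(daily_rows, 1150))       # False
print(is_anomalous(daily_rows, 2_000_000))  # True
```

Only upward deviations are flagged because the concern here is extraction volume; an account that suddenly reads far less is a different question.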

The ISC2 Global Workforce Study reports approximately 5.5 million cybersecurity professionals worldwide, yet one-third of security teams feel understaffed, making automation essential for organizations lacking dedicated analysts.

Test and Document Incident Response Procedures

Implement incident response procedures that define exactly who responds to alerts, what information they collect, how they contain the incident, and when they notify leadership and regulators. Incident response plans must address both cloud-based and on-premises systems since 88 percent of organizations operate multi-cloud environments where response procedures differ between providers.

Test your incident response procedures annually through simulations that exercise your playbook against realistic breach scenarios: not theoretical exercises, but reconstructions of how attackers would move through your infrastructure. Organizations that conduct these tests reduce their breach detection time significantly compared to teams relying on untested procedures. Assign specific roles and responsibilities, establish escalation pathways, and document the SEC disclosure requirement for publicly traded companies, which calls for reporting a material cybersecurity incident within four business days of determining that it is material. Your response process must therefore identify, assess, and escalate incidents quickly enough to meet that deadline.
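Because the SEC deadline is usually counted in business days from the point an incident is judged material, it helps to compute the date rather than estimate it. The helper below is a simplified sketch: `add_business_days` is a hypothetical function that skips weekends only and ignores federal holidays, so confirm any real deadline with counsel.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Return the date `days` business days after `start` (weekends skipped).

    Assumption: holidays are NOT handled, so the result can be early
    when a holiday falls inside the window.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


materiality_determined = date(2025, 3, 6)            # a Thursday, chosen for illustration
print(add_business_days(materiality_determined, 4))  # 2025-03-12
```

Wiring a calculation like this into the incident ticket makes the deadline visible to everyone on the response team from the first hour.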

Final Thoughts

Organizations that implement encryption, multi-factor authentication, and continuous monitoring prevent the breaches that cost millions in recovery expenses, regulatory fines, and reputation damage. The threat landscape intensifies throughout 2026 as attackers deploy autonomous AI agents that compress breach timelines from months to minutes, while ransomware costs reach approximately $74 billion and passwordless authentication adoption accelerates across the industry. Your team must act now by conducting a data inventory that maps sensitive information locations, assigning data stewards who enforce governance policies across all cloud providers, and testing incident response procedures through realistic breach simulations rather than theoretical exercises.

We at Scan N More understand that big data cyber security extends beyond digital systems to physical vulnerabilities that most teams overlook. Our document scanning services transform paper-based processes into secure digital solutions while ensuring compliance with encryption and access control standards, and our hard drive destruction service addresses the physical security component that protects your data infrastructure from unauthorized access. These services eliminate the paper and hardware vulnerabilities that attackers exploit when digital defenses alone prove insufficient.

The complexity of protecting massive datasets demands professional support and systematic execution of proven strategies. Organizations that strengthen their defenses today avoid costs that would otherwise compound for years: they prevent breaches, avoid regulatory violations, and preserve customer trust.