Big data analytics security issues are no longer a future concern; they’re happening right now. Organizations are storing more sensitive information than ever, and attackers are getting smarter about finding vulnerabilities.

At Scan N More, we’ve seen firsthand how quickly a security gap can turn into a costly breach. This guide walks you through the threats, the defenses, and the tools that actually work.

What Makes Big Data Analytics Vulnerable Right Now

The global data landscape is expanding at an unprecedented pace. Statista projects that the total amount of data created and consumed globally will reach 394 zettabytes by 2028, and this explosive growth has created a massive attack surface. Organizations store unstructured, structured, and semi-structured data across multiple locations: cloud platforms, on-premises servers, and hybrid environments. This fragmentation makes it hard to apply security controls consistently. Most companies lack visibility into where all their data actually lives, making vulnerability management a constant game of catch-up.

Distributed analytics systems amplify the problem further. When data flows through Kafka, Spark, Hadoop, or similar frameworks, each processing step introduces potential attack vectors. Real-time analytics environments handling thousands of events per minute create security blind spots because traditional monitoring tools cannot keep pace with the velocity of modern data pipelines.

Where Breaches Actually Happen

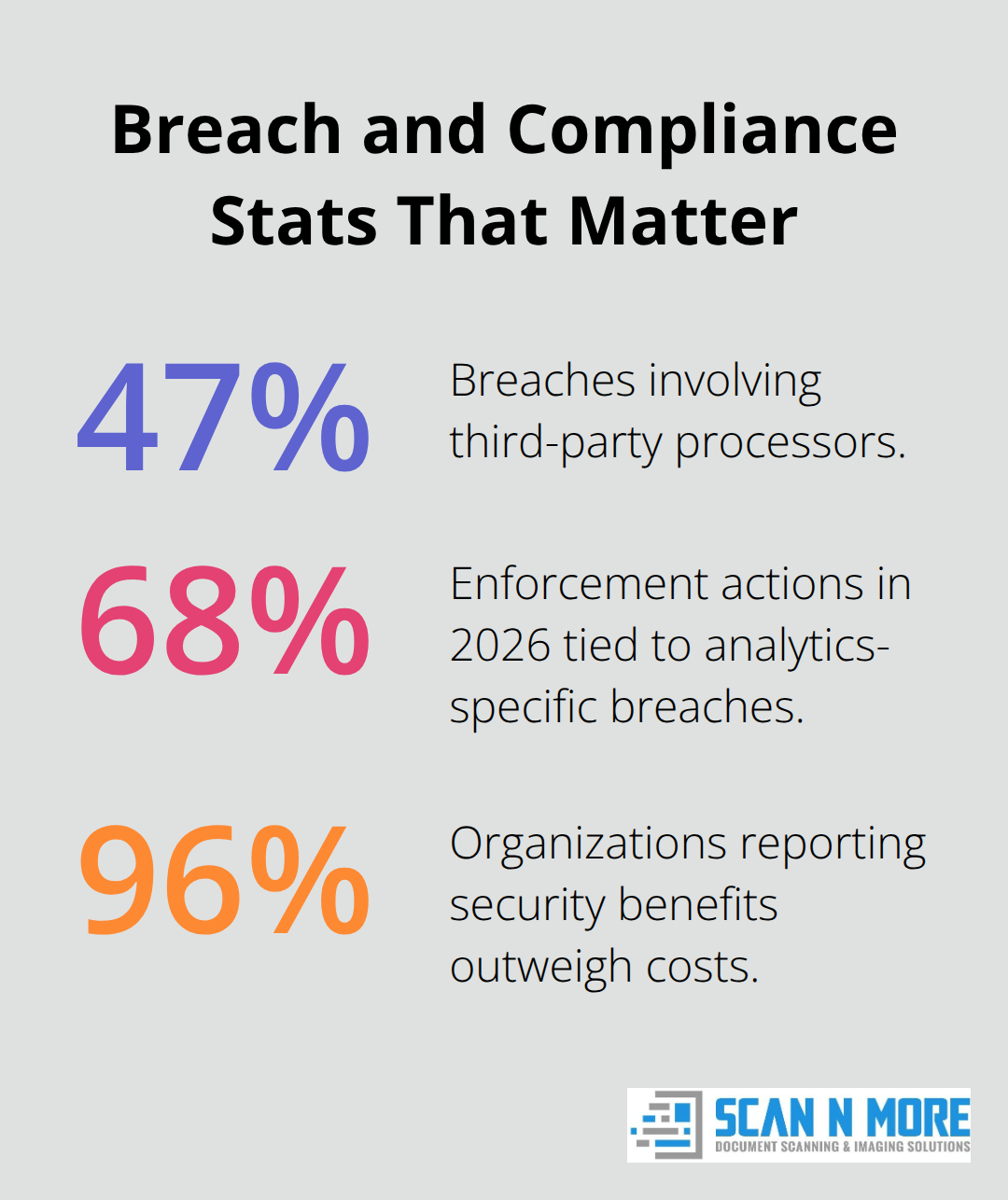

The IBM 2024 Cost of a Data Breach Report reveals that the global average cost of a data breach now stands at 4.88 million dollars, up from 4.45 million in 2023, an increase of roughly 10 percent. Third-party processors account for 47 percent of all breaches according to Verizon’s Data Breach Investigations Report. This means your biggest risk often isn’t your own infrastructure but the vendors and partners you’ve granted access to your data. Insider threats remain a significant concern, particularly in organizations with broad data access policies.

Attackers also exploit metadata and configuration files that organizations treat as non-sensitive assets. Exposed API keys, database credentials, and system details stored in poorly protected files provide attackers with direct pathways into your analytics environment.
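
One way to catch the exposed credentials described above is to scan configuration files for secret-like strings before they are deployed. The sketch below is a minimal illustration, not a production scanner: the two patterns, the `scan_text` helper, and the sample config are all assumptions made for this example, and real scanners ship far larger rule sets.

```python
import re

# Hypothetical rule set for illustration only.
SECRET_PATTERNS = {
    # AWS access key IDs are commonly matched as "AKIA" plus 16 uppercase alphanumerics.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Any key whose name contains a credential-like word and has a value of 8+ characters.
    "generic_credential": re.compile(
        r"(?i)(password|passwd|secret|api[_-]?key|token)\w*\s*[:=]\s*['\"]?([^\s'\"]{8,})"
    ),
}


def scan_text(text):
    """Return (line_number, rule_name) for every line that matches a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Made-up config content; the key below is not a real credential.
sample_config = """\
spark.master=yarn
db_password = "s3cr3t-Pa55word"
aws_key=AKIAABCDEFGHIJKLMNOP
log_level=INFO
"""

for line_number, rule_name in scan_text(sample_config):
    print(f"line {line_number}: possible secret ({rule_name})")
```

Running a check like this in a pre-deployment step treats configuration and metadata files as sensitive assets rather than as an afterthought.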

Regulatory Violations Hit Hard

Regulatory violations have become shockingly expensive. Analytics-specific breaches accounted for 68 percent of 2026 enforcement actions, driven primarily by machine learning model training on non-consented data and A/B tests exposing personally identifiable information. Average penalties per incident run around 2.8 million dollars when you factor in remediation, legal fees, and stock impact, according to the DLA Piper GDPR fines tracker. Twenty U.S. states now enforce privacy statutes, and California’s amendments classify data from users under sixteen as sensitive, creating immediate compliance headaches for any organization collecting consumer analytics.

The Access Control Problem

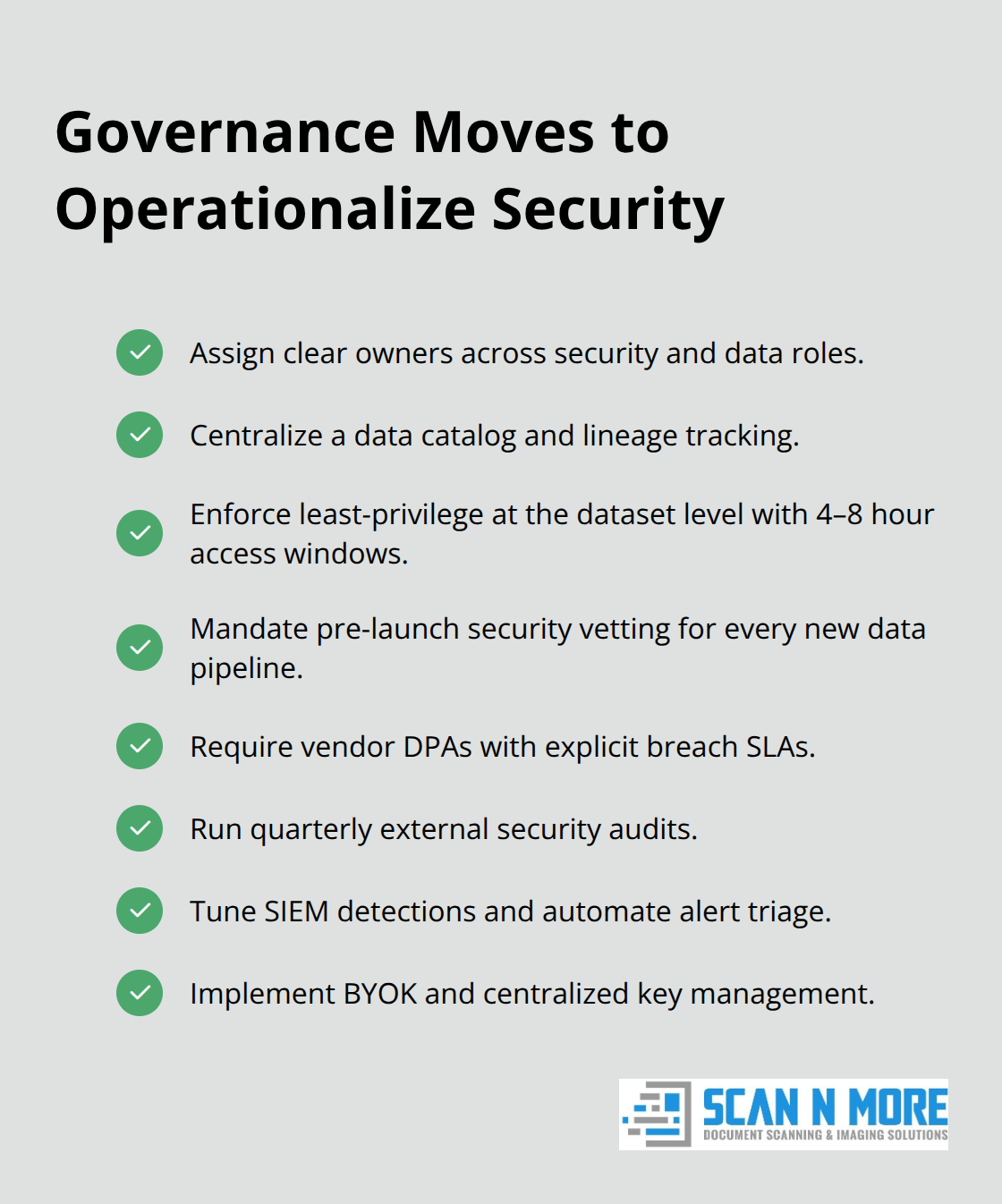

Role-based access control sounds straightforward in theory but becomes chaotic at scale. When data scientists need quick access to datasets for model training, when analysts request temporary elevated permissions, and when contractors require limited views of sensitive information, enforcement becomes inconsistent. The CxO Institute found that enterprise zero-trust architecture adoption doubled from 35 percent in 2020 to 70 percent in 2025, signaling a critical shift toward continuous authentication and least-privilege access. Yet most organizations implementing zero-trust only apply it to network infrastructure, not to their analytics environments. Dataset-level access controls with short-lived access windows (four to eight hours) and comprehensive query logging remain rare outside of forward-thinking enterprises.

Shadow IT and Orphaned Data

Shadow IT in big data projects creates unsanctioned tools and data pipelines that bypass security controls entirely. Data engineers spin up temporary extracts for testing, analysts build ad-hoc dashboards without approval, and contractors use personal cloud accounts to process data. These shadow data copies often persist long after their purpose expires, creating orphaned datasets that attackers can exploit. The solution demands centralized pipeline creation with mandatory security vetting before any new data flow goes live. Organizations that establish this control eliminate the rogue infrastructure that typically accounts for the largest portion of unmanaged risk. What separates secure analytics environments from vulnerable ones is not the tools they purchase but the governance framework they enforce from day one.

Building a Security-First Analytics Architecture

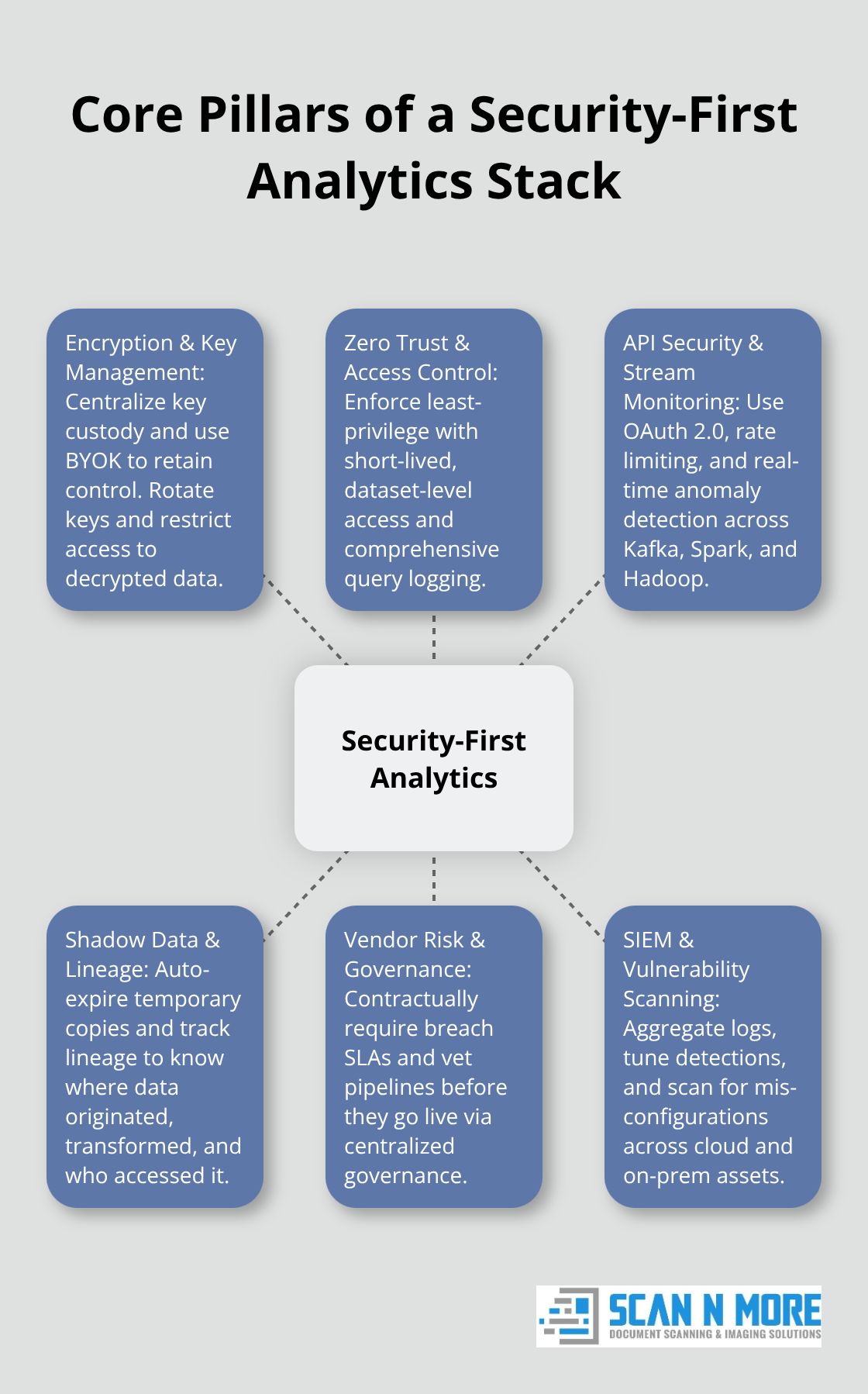

Encryption, Key Management, and Access Control

Encryption and access controls form the foundation, but implementation matters far more than the decision to deploy them. Most organizations encrypt data at rest and in transit, then stop there. The real vulnerability emerges in how you manage encryption keys and who gets access to decrypted data. Centralized encryption key management systems prevent scattered credentials from becoming attack vectors, and bringing your own keys into cloud environments gives you control that shared key models cannot match.

Role-based access control fails without enforcement mechanisms. Zero-trust architecture adoption jumped to 70 percent across enterprises in 2025, yet most implementations only protect network perimeters, leaving analytics datasets wide open. Dataset-level controls with short-lived access windows of four to eight hours and comprehensive query logging catch unauthorized access attempts that traditional firewalls miss entirely. When a data scientist requests access to production customer data, the system grants it for exactly the time needed, then revokes it automatically. This prevents the lingering permissions that insider threats exploit.
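
The time-boxed grant described above can be sketched in a few lines. This is an in-memory illustration under stated assumptions: `AccessGrant`, `GrantStore`, and the eight-hour `MAX_WINDOW` cap are hypothetical names chosen for this example, and a real deployment would enforce the same rule in the warehouse or identity provider.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Upper bound taken from the four-to-eight-hour guidance in the text.
MAX_WINDOW = timedelta(hours=8)


@dataclass(frozen=True)
class AccessGrant:
    user: str
    dataset: str
    expires_at: datetime


class GrantStore:
    """In-memory store of short-lived, dataset-level grants (illustrative only)."""

    def __init__(self):
        self._grants = {}
        self.query_log = []  # every access check is recorded, allowed or not

    def grant(self, user, dataset, duration, now):
        if duration > MAX_WINDOW:
            raise ValueError("requested window exceeds the maximum allowed")
        new_grant = AccessGrant(user, dataset, now + duration)
        self._grants[(user, dataset)] = new_grant
        return new_grant

    def is_allowed(self, user, dataset, now):
        current = self._grants.get((user, dataset))
        # Expiry is evaluated on every check, so no separate revocation job is needed.
        allowed = current is not None and now < current.expires_at
        self.query_log.append((now, user, dataset, allowed))
        return allowed


store = GrantStore()
start = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
store.grant("data_scientist", "prod_customers", timedelta(hours=4), now=start)
print(store.is_allowed("data_scientist", "prod_customers", now=start + timedelta(hours=1)))
print(store.is_allowed("data_scientist", "prod_customers", now=start + timedelta(hours=5)))
```

Checking expiry at access time, rather than relying on a cleanup task, means a grant cannot linger if the cleanup fails to run.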

Securing APIs and Real-Time Data Flows

OAuth 2.0 token-based authentication with rate limiting on API endpoints stops attackers from rapidly probing your Kafka, Spark, or Hadoop frameworks for weaknesses. Insecure APIs in these systems remain a primary entry point, so API activity logging with real-time alerts transforms passive infrastructure into an active defense layer. Real-time monitoring separates organizations that catch breaches in hours from those that discover them in months.
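
Rate limiting is often implemented with a token bucket, which allows short bursts while capping the sustained request rate. The sketch below is one possible version, not the behavior of any specific API gateway: the `TokenBucket` class and its parameters are assumptions for illustration, and timestamps are passed in explicitly to keep the logic easy to test.

```python
class TokenBucket:
    """Allow up to `capacity` burst requests, refilled at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, now=0.0):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.last = now

    def allow(self, now):
        # Add tokens for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last = max(self.last, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# One bucket per client credential (hypothetical client IDs).
buckets = {"client-a": TokenBucket(capacity=3, refill_per_second=1.0)}
for timestamp in (0.0, 0.0, 0.0, 0.0, 1.0):
    print(timestamp, buckets["client-a"].allow(timestamp))
```

A rejected request would typically be answered with HTTP 429 and logged, so that repeated probing from one credential shows up in the API activity log.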

Distributed analytics systems process thousands of events per minute, and traditional log analysis cannot keep pace with this velocity. Stream security monitoring tools detect anomalies as data flows through pipelines rather than waiting for batch analysis after damage occurs.
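
As a rough illustration of detecting anomalies while data is in flight, the sketch below flags per-interval event counts that deviate sharply from a rolling baseline. It is a simplified stand-in for what commercial stream monitoring tools do: the `RateAnomalyDetector` class, the window size, and the z-score threshold of 3 are all assumptions chosen for this example.

```python
from collections import deque
from statistics import mean, pstdev


class RateAnomalyDetector:
    """Flag event counts that deviate strongly from a rolling baseline."""

    def __init__(self, window=30, threshold=3.0, min_samples=10):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, count):
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                is_anomaly = abs(count - mu) / sigma > self.threshold
            else:
                # A perfectly flat baseline: any change at all is unusual.
                is_anomaly = count != mu
        if not is_anomaly:
            # Keep flagged values out of the baseline so a spike does not mask the next one.
            self.history.append(count)
        return is_anomaly


detector = RateAnomalyDetector()
counts = [100, 102] * 10 + [500, 101]  # made-up events-per-interval values
for count in counts:
    if detector.observe(count):
        print(f"anomalous event rate: {count}")
```

Excluding flagged values from the baseline is a deliberate trade-off: it keeps spikes from inflating the baseline, but a legitimate, lasting change in traffic would need a manual reset or a more adaptive model.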

Managing Shadow Data and Data Lineage

Shadow data copies represent your biggest blind spot: temporary extracts for testing, ad-hoc exports, and contractor datasets that nobody officially tracks. Implement automatic expiration rules that delete temporary data copies after defined periods, then audit what actually gets retained. Data lineage tracking shows where every dataset originated, how it was transformed, and who accessed it, which transforms incident response from guesswork into precision. When a breach notification arrives, you need to know exactly which customers were affected within minutes, not days.
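
Both ideas, expiration rules and lineage tracking, reduce to simple bookkeeping once every copy is registered. The sketch below assumes a hypothetical registry of `DataCopy` records. `split_expired` applies a time-to-live rule, and `downstream` walks the lineage to list every dataset derived from a compromised source. All names and sample values are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DataCopy:
    name: str
    source: str  # lineage: the dataset this copy was derived from
    owner: str
    created_at: datetime
    ttl: timedelta


def split_expired(copies, now):
    """Partition copies into (expired, retained) based on created_at + ttl."""
    expired, retained = [], []
    for copy in copies:
        (expired if now >= copy.created_at + copy.ttl else retained).append(copy)
    return expired, retained


def build_lineage(copies):
    """Map each source dataset to the names of copies derived directly from it."""
    lineage = {}
    for copy in copies:
        lineage.setdefault(copy.source, []).append(copy.name)
    return lineage


def downstream(lineage, root):
    """Return every dataset derived, directly or indirectly, from root."""
    found, stack = set(), [root]
    while stack:
        current = stack.pop()
        for child in lineage.get(current, []):
            if child not in found:
                found.add(child)
                stack.append(child)
    return found


created = datetime(2025, 1, 1, tzinfo=timezone.utc)
registry = [
    DataCopy("test_extract", "customers", "engineer", created, timedelta(days=7)),
    DataCopy("contractor_view", "test_extract", "contractor", created, timedelta(days=30)),
    DataCopy("orders_sample", "orders", "analyst", created, timedelta(days=7)),
]

expired, retained = split_expired(registry, now=created + timedelta(days=8))
print("delete:", [copy.name for copy in expired])
print("affected by a 'customers' breach:", sorted(downstream(build_lineage(registry), "customers")))
```

Note that in this example `contractor_view` outlives the extract it was built from, which is exactly the kind of orphaned copy an audit of retained data should surface.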

Vendor Risk and Data Governance

Third-party risk dominates breach statistics at 47 percent of incidents, making vendor security audits non-negotiable. Require data processing agreements with specific breach notification service level agreements, not vague promises. The Hertz and Cleo case demonstrated how vendor negligence cost Hertz 2.74 million dollars in direct costs plus approximately 8 million dollars in lost customer lifetime value because security requirements were missing from contracts. Data governance frameworks prevent these failures by establishing ownership, classification standards, and security vetting before any new data pipeline goes live. Organizations using centralized data catalogs eliminate the rogue infrastructure that typically accounts for the largest portion of unmanaged risk. Quarterly policy reviews aligned to regulatory changes ensure your controls match current requirements rather than last year’s standards. These governance practices create the operational discipline that transforms security from a reactive expense into a competitive advantage, positioning your organization to respond faster when threats emerge.

Real Tools That Actually Stop Data Breaches

The technology stack you deploy matters far less than how you configure and monitor it. Organizations waste millions on enterprise firewalls and SIEM platforms that sit misconfigured, producing false positives until security teams ignore alerts entirely. Effective data protection requires tools matched to your actual threat landscape, not vendor marketing.

Stream Monitoring and API Security

Stream security monitoring systems like Apache Metron and Splunk detect anomalies in real-time data flows as events move through Kafka or Spark, catching unauthorized access patterns within seconds rather than discovering them during post-breach forensics. Traditional network firewalls cannot inspect encrypted analytics traffic, so you need API gateways that enforce OAuth 2.0 token-based authentication, rate limiting, and comprehensive activity logging on every endpoint. When your Hadoop cluster processes 30,000 events per minute, a gateway with real-time alerting stops attackers from probing for weaknesses while batch-based detection remains inactive.

Data Loss Prevention and Insider Detection

Data loss prevention solutions that only scan email and USB drives miss your actual exfiltration paths. Modern DLP must monitor outbound traffic patterns, flag unusual data transfers from your warehouse to external IPs, and integrate with next-generation firewalls to block suspicious activity instantly. Insider threat detection requires analyzing logs from common access points (Remote Desktop Protocol, VPN, Active Directory, and endpoints) to spot when users suddenly access datasets they’ve never touched before or transfer gigabytes to personal cloud storage.
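
The two insider signals named above, first-time dataset access and unusually large outbound transfers, can be expressed as simple rules over parsed log events. The sketch below is a toy illustration, not how any particular DLP or UEBA product works: the `AccessBaseline` class, the alert names, and the one-gigabyte default limit are assumptions made for this example.

```python
from collections import defaultdict


class AccessBaseline:
    """Track which datasets each user normally touches and flag departures."""

    def __init__(self, transfer_limit_bytes=1_000_000_000):
        self.seen = defaultdict(set)
        self.transfer_limit_bytes = transfer_limit_bytes  # hypothetical threshold

    def learn(self, user, dataset):
        """Record historical access without raising alerts."""
        self.seen[user].add(dataset)

    def check(self, user, dataset, bytes_out):
        """Return a list of alert names for a single access event."""
        alerts = []
        if dataset not in self.seen[user]:
            alerts.append("first_time_dataset_access")
        if bytes_out > self.transfer_limit_bytes:
            alerts.append("large_outbound_transfer")
        self.seen[user].add(dataset)
        return alerts


baseline = AccessBaseline()
baseline.learn("analyst", "web_clickstream")

# Made-up events: (user, dataset, bytes transferred out)
events = [
    ("analyst", "web_clickstream", 5_000_000),
    ("analyst", "customer_pii", 4_000_000_000),
]
for user, dataset, bytes_out in events:
    print(user, dataset, baseline.check(user, dataset, bytes_out))
```

Fixed rules like these produce false positives when roles change, so in practice they are usually a starting point that feeds a review queue rather than an automatic block.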

SIEM Platforms and Vulnerability Scanning

Security Information and Event Management platforms aggregate logs from firewalls, databases, cloud platforms, and endpoints into a single investigation workspace, but only if you configure them correctly. Most organizations collect terabytes of logs monthly yet lack the expertise to tune detection rules, resulting in alert fatigue that masks real threats. IBM’s 2024 Cost of a Data Breach Report found that AI and automation-powered security tools save approximately 2.22 million dollars per breach compared to manual response. Vulnerability management tools like Qualys and Rapid7 scan your entire analytics infrastructure (cloud storage buckets, API endpoints, database servers, Kubernetes namespaces) and flag misconfigurations before attackers find them.

External Audits and Key Management

Quarterly security audits from external partners cost between 50,000 and 150,000 dollars annually but identify blind spots your internal team misses. Cisco’s 2025 Data Privacy Benchmark found that 96 percent of respondents confirmed security initiatives’ benefits outweighed costs, making outsourced audits a measurable investment rather than pure expense. Encryption key management systems prevent scattered credentials from becoming attack vectors. Cloud providers offer bring-your-own-key (BYOK) options that let you maintain control while avoiding the overhead of on-premises hardware security modules.

Governance and Operational Ownership

Tool selection is worthless if your team cannot operate the tools effectively. Governance frameworks that assign clear ownership (CISO, Data Security Officer, Chief Data Officer, and Data Governance Team) transform technology into actual protection. These roles establish accountability for configuration, monitoring, and response across your entire analytics environment.

Final Thoughts

Big data analytics security issues demand immediate action, not delayed planning. Organizations that treat security as an afterthought face breach costs averaging 4.88 million dollars, regulatory penalties around 2.8 million per incident, and customer trust erosion that lasts years. The strategies outlined here work because they address the actual vulnerabilities in your environment rather than theoretical threats. Encryption and access controls form your foundation, but only when you implement them with dataset-level granularity and short-lived access windows that expire after four to eight hours.

Real-time monitoring catches breaches in hours instead of months, and data governance frameworks eliminate shadow IT before it becomes an attack vector. AI-powered security tools save approximately 2.22 million dollars per breach compared to manual response, while external audits cost between 50,000 and 150,000 dollars annually yet identify blind spots your internal team misses. Proactive privacy-mature companies report 40 percent fewer breaches and 15 to 20 percent higher customer lifetime value, making security investments pay for themselves through prevented incidents and operational efficiency. Start by assigning clear ownership to your CISO, Data Security Officer, and Chief Data Officer, then implement centralized data governance with mandatory security vetting before any new pipeline goes live.

Twenty U.S. states enforce privacy statutes, and analytics-specific breaches accounted for 68 percent of 2026 enforcement actions, making compliance non-negotiable for organizations handling sensitive data. We at Scan N More understand this challenge through our professional document scanning services, which transform paper-based processes into digital solutions while maintaining data security and compliance standards. Whether you manage legal documents, medical records, or sensitive business files, secure digitization prevents vulnerabilities before they start, and our hard drive destruction services protect your organization during the transition to digital environments.